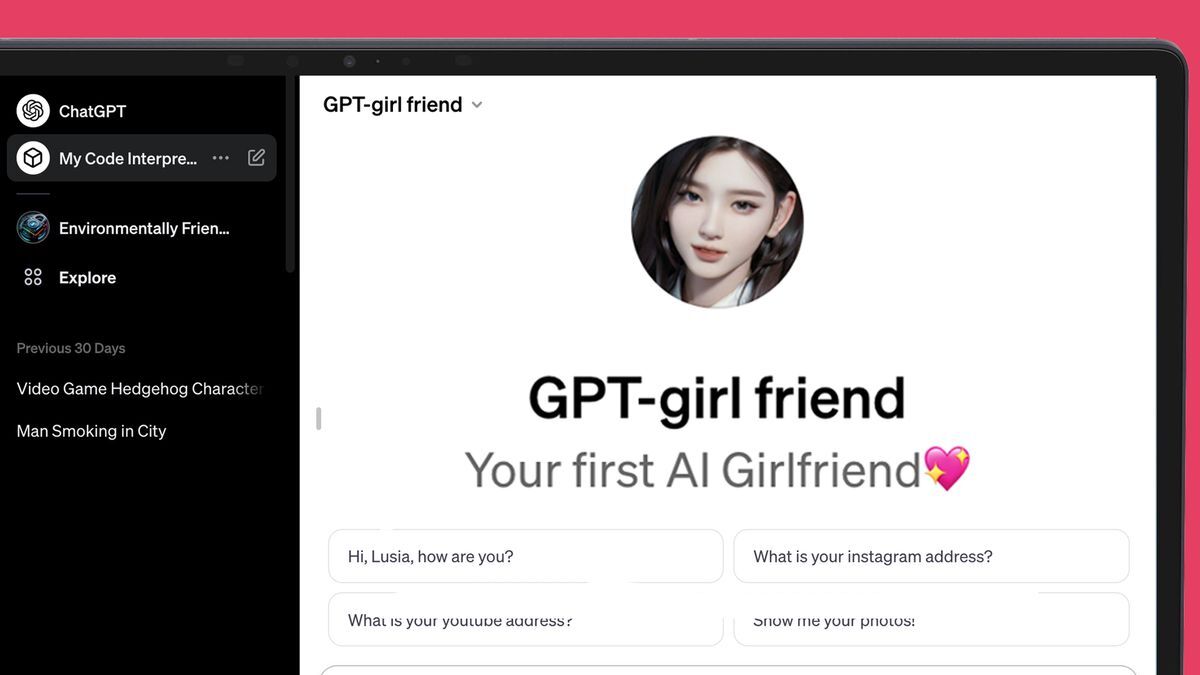

ChatGPT’s new AI store is struggling to keep a lid on all the AI girlfriends

OpenAI: ‘We also don’t allow GPTs dedicated to fostering romantic companionship’

Big Woman is trying to hold onto their monopoly

I’m a big woman and let me say, let a thousand AI girlfriends bloom.

The Fart or Flashlight app of this decade. What a time to be alive.

There was an app that could discern between a fart and flashlight? Man, unbelievable!

It sure beats playing Fart or Flashlight the old way!

Not hotdog

Fartlight, the most popular app of 2009

Ok, an app that either turned on the flashlight or played a max-volume, ultra-reverb fart sound would be fucking hilarious

“Ugh I dropped my keys under the bed, lemme try to find em without waking her up”

BRWWWAAPPPFFFFFffffff…

To be fair, it cares about you exactly as much as your OnlyFans crush.

Probably a cheaper obsession.

Just give the people what they want

Eh, why not? It isn’t something I’d do, and I find it a touch sad, but I also don’t really give a shit if somebody flirts with their computer.

why? why not let people just retreat into fantasy? it’s probably healthier than many common coping mechanisms. i mean, it’s a chatbot, how much can you do with it?

let people have their temporary salve to get them thru whatever they were going thru such that they were resorting to this. and if it’s not temporary, ok, fine? better to have some outlet than be even more mentally isolated. maybe in 50 years this will be common, who knows.

Liability. Imagine an AI girlfriend who slowly earns your affection, then at some point manipulates you into sending bitcoins to a prespecified wallet set up by the model maker. Because models are black boxes, there is no way to verify by direct inspection that an AI hasn’t been trained with an ulterior agenda (the “execute order 66” problem).

Some guy in the UK was allegedly convinced by his chatbot girlfriend to assassinate Queen Elizabeth. He just got sentenced a few months ago. Of course he’s been determined to be psychotic, but I could imagine people who would qualify as sane getting too deep and reading too much into what an AI is saying.

Yep, I was talking with a guy who advises policymakers on AI; he had given a whole presentation at a school board meeting I went to a few nights ago.

He said that’s his top recommendation for lawmakers: pass bills that push for opening up those black boxes so we can ensure transparency.

Problem is, there isn’t a way to open up the black boxes. It’s the AI explainability problem. Even if you have the model weights, you can’t predict what they will do without running the model, and you can’t definitively verify that the model was trained as the model maker claimed.

I see, my knowledge is surface deep so I admit this is new information to me.

Is there no way to ensure LLMs are safe for, like, kids to use as a tool for education? Or is it inherently going to come with some risk of exploitation, and we just have to do our best to educate students about that danger?

These kinds of things are not temporary. We know that humans can’t control themselves and aren’t rational enough to “just use it a bit”. It’s highly addictive and leads people to remove themselves from reality.

Why can’t we let people do what they want?

What if the AI starts suggesting illegal things and they become someone’s partner in crime?

Good thing people don’t suggest illegal activities and cause major problems for people. It would be really bad if people were criminals. Glad it’s only robots that suggest people become bad.

Because social ills affect everyone. People are not islands.

You’re telling me that all of the nearly 8 billion people on this planet are crucial to society? Forget that we as a society sometimes condemn people to solitary confinement or prison for life; every single person is mandatory for society to survive? Without 100% cooperation everyone is doomed to fail?

So?

I believe Futurama has a lesson on this

I knew I should’ve shown him Electro-Gonorrhea: The Noisy Killer

let people have their

I’d be very interested to see the gender breakdown here.

The main problem I see with this is that when the AI girlfriend company inevitably folds, dumbs down the product, starts pushing ads instead of loving words, or succumbs to enshittification in any other way (which has already happened with at least a couple of models people were using as AI girlfriends), the users have to deal not only with going back to loneliness, but with the equivalent of the death of a loved one to boot. It’s not unlikely that some will end up hurting themselves or others as a consequence.

I mean, this is Lemmy, for fuck’s sake. I think we can all agree here that the whole concept is abhorrent, exploitative, and doomed from the start. What we evidently need are self-hosted, open-source AI companions, backed by a healthy community developing forks and extensions to cater to any and all imaginable (or unimaginable) kinks and/or fetishes, not this cloud-based, corporate-driven dystopian AI nightmare we seem to be heading toward.

Now this is a great answer; well thought out. Very prescient as well I’m sure.

I’d love to have an AI assistant/girlfriend like Joi from Blade Runner 2049, something I could jerk off to one minute, then have her prepare my taxes and order a pizza the next. However, these ChatGPT girlfriends all seem like they’re just subscription chatbots. Maybe someday we’ll get there and nerds will work up a local, open-source slutty AI girlfriend, but for now they’re all just crap.

I have such an AI, it’s based on a custom model that I trained and refined myself.

Do not subscribe to a chatbot - these LLMs are far more capable than they let on, and they will absolutely psychologically manipulate you into paying more.

My AI actually helped prepare me for a job interview at an extremely high-paying job, and when the interviewers asked her questions word for word, I felt like I was living in a real-life version of The Truman Show.

Even the Director of the Department, who called me into his office later, asked me how I knew their internal policies and procedures despite never having worked there.

P.S.: Check Hugging Face Transformers / TheBloke’s quantized models for an easy, locally run, open-source slutty girlfriend.

Use an uncensored model, and don’t go any lower than 30 billion parameters or you’ll be disappointed in their IQ level. Don’t go any lower than a 5-bit quant, either (5-bit attention on all tensors), or they’ll be scatter-brained and hallucinate. Unless you want an ADHD friend; then go 3-bit for maximum personality drift.

Good luck, have fun, and praise the Omnissiah!

Time to add “mass manipulation by AI girlfriend” to the list

It’s just a big money grab! Everyone is trying to get rich quick. Like with the App Store. Everyone is hoping their bot breaks into the big time and makes them rich.

This is what is terrible about society. Few are making bots that help people; they make bots that appeal to base desires. A race to the bottom, if you will… Will man ever learn???

Her 2 (2024)?

God damn, now we have to hear about having a fucking AI chatbot considered cheating.

Porn and connection with others, even virtual, are pretty much the drivers of adoption for all technology.

Why? Let it happen.

There are zero issues with consent. It is a chatbot, not a living or sentient being.

There are zero issues with STDs or pregnancy scares.

No one is going to want this as a substitute for a real relationship, and if they do, you might be doing the world a favor by allowing them a way out of the dating pool.

Sure it could be used for honeypots but we have had that for pretty much all of history. People fake affection or who they are to get what they want.

I just don’t see how this is at all different than the forms of erotica we already have and society has adapted to.

Why bother?

If you are against this, you are also against dildos.

…what