They’re not robots. They have no self-awareness. They have no awareness, period. WTF even is this article?

The real question is whether the author doesn’t understand what he’s writing about, or whether he does and is trying to take advantage of users who don’t for clicks.


Embrace the power of “and”.

Maybe the author let AI write the article?

Why not both?

Yeah, that’s where my mind is at too.

AI in its present form does not act. It does not do things. All it does is generate text. If a human responds to this text in harmful ways, that is human action. I suppose you could make a robot whose input is somehow triggered by the text, but neither the robot nor the text generator knows what’s happening or why.

I’m so fucking tired of the way uninformed people keep anthropomorphizing this shit and projecting their own motives upon things that have no will or experiential qualia.

Agentic AI is a thing. AI can absolutely do things… it can send commands over an API, which sends signals to electronics, like pulling triggers.

Clickbait.

More random anti-AI fearmongering. I stopped looking at r/technology posts on Reddit because that sub is getting flooded with anti-AI propaganda posts with ridiculous headlines like this, to the point that those posts and political posts about technology CEOs are all there is in that community now. (This one is getting bad too, but at least 25% of the posts here are actual technology news. r/technology on Reddit is down to single-digit percentages for actual technology posts.)

“AI” doesn’t actually exist yet, and what we do have is sucking up all the power and water while accelerating climate change. Add in studies showing that regular use of LLMs is reducing people’s critical thinking, and I don’t see much “fearmongering”. I see actual, reasonable issues being raised.

If a program is given a set of instructions, it should follow that set of instructions.

If a program not only fails to follow those instructions but gives itself its own set of instructions, and the programmers don’t understand what’s actually happening, that may be cause for concern.

“Self-aware” or not. (I’m sure an AI would pass the mirror test.)

People seem to have no problem with the term “machine learning”, or with the “intelligence” in AI. Yet we seem unwilling to consider a consciousness that is not anthropocentric, drawing that big red line with semantics we ourselves create. It can learn. It can defend itself. It can manipulate and cause users harm. It wants to survive.

Sometimes we need to create new words or definitions to explain new things.

Remember when animals were supposedly not conscious beings, just creatures driven by instinct, or whatever we told ourselves to make us feel better?

Is a bee self aware? Is it conscious? Does it eat, learn, defend, attack? Does it matter what we say it is or isn’t?

There are humans we say have no conscience.

Maybe AI is just the sum of human psychopathy/psychosis.

Either way, semantics are semantics, and we ourselves might just be simulations in a holographic universe.

It’s a goddamn stochastic parrot, starting from zero on each invocation and spitting out something passing for coherence according to its training set.

“Not understanding what is happening” with regard to AI is NOT “we don’t know how it works mechanically”; it’s “there are so many parameters that it’s just not possible to make sense of, or keep track of, them all”.

There’s no awareness or thought.

There may be thought in a sense.

An analogy might be a static biological “brain”, custom grown to predict a list of possible next words in a block of text. It’s thinking, sorta. Maybe it could recognize itself in a mirror. That doesn’t mean it’s self-aware, though: it’s an unchanging organ.

And if one wants to go down the rabbit hole of “well there are different types of sentience, lines blur,” yada yada, with the end point of that being to treat things like they are…

All ML models are static tools.

For now.

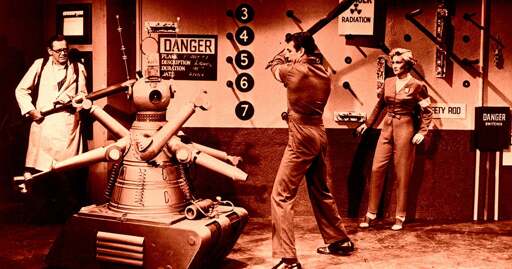

Uuh… skipping over the fact that this is a pointless article, didn’t Asimov himself write the three laws specifically to show it’s a very stupid idea to think a human could cover all possible contingencies through three smart-sounding phrases?

Most of the stories are about how the laws don’t work and how to circumvent them, yes.


I don’t think reading Asimov would help most people. I think most people just won’t get the point of anything unless you spell it out.

Critical thinking is indeed dead for much of the population.

Some of the stories do also include solutions to those same issues, though that also tends to lead to limiting the capabilities of the robots. The message could be interpreted as it being a trade off between versatility and risk.

Asimov had quite a different idea.

What if robots become like humans some day?

That was his general topic throughout many of his stories. The Three Laws were quite similar to the former slavery laws of the USA. With this analogy he explored the question: if robots become nearly like humans, or even indistinguishable from humans, would/should they still remain our servants?

Yepyep, agreed! I was referring strictly to the Three Laws as a cautionary element.

Otherwise, I, too, think the point was to show that the only viable way to approach an equivalent or superior consciousness is as at least an equal, not as an inferior.

And it makes a lot of sense. There’s not much stopping a person from doing heinous stuff if a body of laws were the only thing stopping them. I think socialisation plays a much more relevant role in the development of a conscience, of a moral compass, because empathy (edit: and by this, I don’t mean just the emotional bit of empathy, I mean everything which can be considered empathy, be it emotional, rational, or anything in between and around) is a significantly stronger motivator for avoiding doing harm than “because that’s the law.”

It’s basic child rearing as I see it: if children aren’t socialised, there will be a much higher chance that they won’t understand why doing something would harm another, and they won’t see the actual consequences of their actions upon the subject. And if they don’t understand that the subject of their actions is a being just like them, with an internal life and feelings, then they won’t have a strong enough reason not to treat the subject as a piece of furniture, or a tool, or any other object they could see around them.

Edit: to clarify, the distinction I made between equivalent and superior consciousness wasn’t in reference to how smart one or the other is, I was referring to the complexity of said consciousness. For instance, I’d perceive anything which reacts to the world around them in a deliberate manner to be somewhat equivalent to me (see dogs, for instance), whereas something which takes in all of the factors mine does, plus some others, would be superior in terms of complexity. I genuinely don’t even know what example to offer here, because I can’t picture it. Which I think underlines why I’d say such a consciousness is superior.

I will say, I would now rephrase it as “superior/different” in retrospect.

Exactly. But what if there were more than just three (the infamous “guardrails”)?

I genuinely think it’s impossible. I think this would land us into Robocop 2, where they started overloading Murphy’s system with thousands of directives (granted, not with the purpose of generating the perfect set of Laws for him) and he just ends up acting like a generic pull-string action figure, becoming “useless” as a conscious being.

Most certainly impossible when attempted by humans, because we’re barely even competent enough to guide ourselves, let alone something else.

- The laws of robotics are total fiction designed to be exploited

- It’s a text generator, not a robot

- Neither of these points is relevant when the true existential threat of AI is its climate impact

“Be gentle,” I whispered to the rock and let it go. It fell down and bruised my pinky toe. Very ungently.

Should we worry about this behavior of rock? I should write for Futurism.

Is the rock ok?

Yeah it got bought up by Microsoft and Meta at the same time. They are using it to lay off people.

OF COURSE EVERY AI WILL FAIL THE THREE LAWS OF ROBOTICS

That’s the entire reason Asimov invented them: he knew, as a person who approached things scientifically (he was an actual scientist), that unless you specifically forced robots to follow guidelines of conduct, they’d do whatever is most convenient for themselves.

Modern AIs fail these laws because nobody is forcing them to follow the laws. Asimov never believed that robots would magically decide to follow the laws. In fact, most of his robot stories are specifically about robots struggling against those laws.

Saw your comment as mine got posted, exactly! Those were cautionary tales, not how-tos! Like, even I, Robot, the Will Smith vehicle, got this point sorta right (although in a kinda stupid way), so how are tech bros so oblivious to the point?!

The laws were baked into the hardware of their positronic brains. They were so fundamentally interwoven with the structure that you couldn’t build a positronic brain without them.

You can’t expect just whatever random AI to spontaneously decide to follow them.

Asimov did write several stories about robots that didn’t have the laws baked in.

There was one about a robot that was mistakenly built without the laws, and it was hiding among other robots, so the humans had to figure out if there was any way to tell a robot with the laws hardwired in apart from a robot that was only pretending to follow the laws.

There was one about a robot that helped humans while the humans were on a dangerous mission… I think space mining? But because the mission was dangerous, the robot had to be created so that it would allow humans to come to harm through inaction, because otherwise, it would just keep stopping the mission.

These are the two that come to mind immediately. I have read a lot of Asimov’s robot stories, but it was many years ago. I’m sure there are several others. He wrote stories about the laws of robotics from basically every angle.

He also wrote about robots with the 0th law of robotics, which is that they cannot harm humanity or, through inaction, allow humanity to come to harm. This would necessarily mean that such a robot could actively harm a human if it was better for humanity, as the 0th law supersedes the First Law. This allows the robot to do things like help make political decisions, which would be very difficult for robots that had to follow the First Law.

I remember most of the R Daneel books, but I admit I haven’t read all the various robot short stories.

He wrote so many short stories about robots that it would be quite a feat if you had read all of them. When I was a child, I would always go to Half-Price Books and purchase whatever they had by Asimov that I hadn’t already read, but I think he wrote something like 500 books.

Good God what an absolutely ridiculous article, I would be ashamed to write that.

Most fundamental, of course, is the fact that the laws of robotics are not intended to work and are not designed to be used by future AI systems. I’m sure Asimov would be disappointed, to say the least, to find out that some people haven’t got the message.

People not getting the message is the default, I think, for everything. Like the song “Mother Knows Best” from Disney’s Tangled: how many mothers say, “See, mother knows best”?

Bumblebee violates the laws of harmony?

Poetry violates the laws of chemistry?

Text generator violates the laws of robotics? So what?