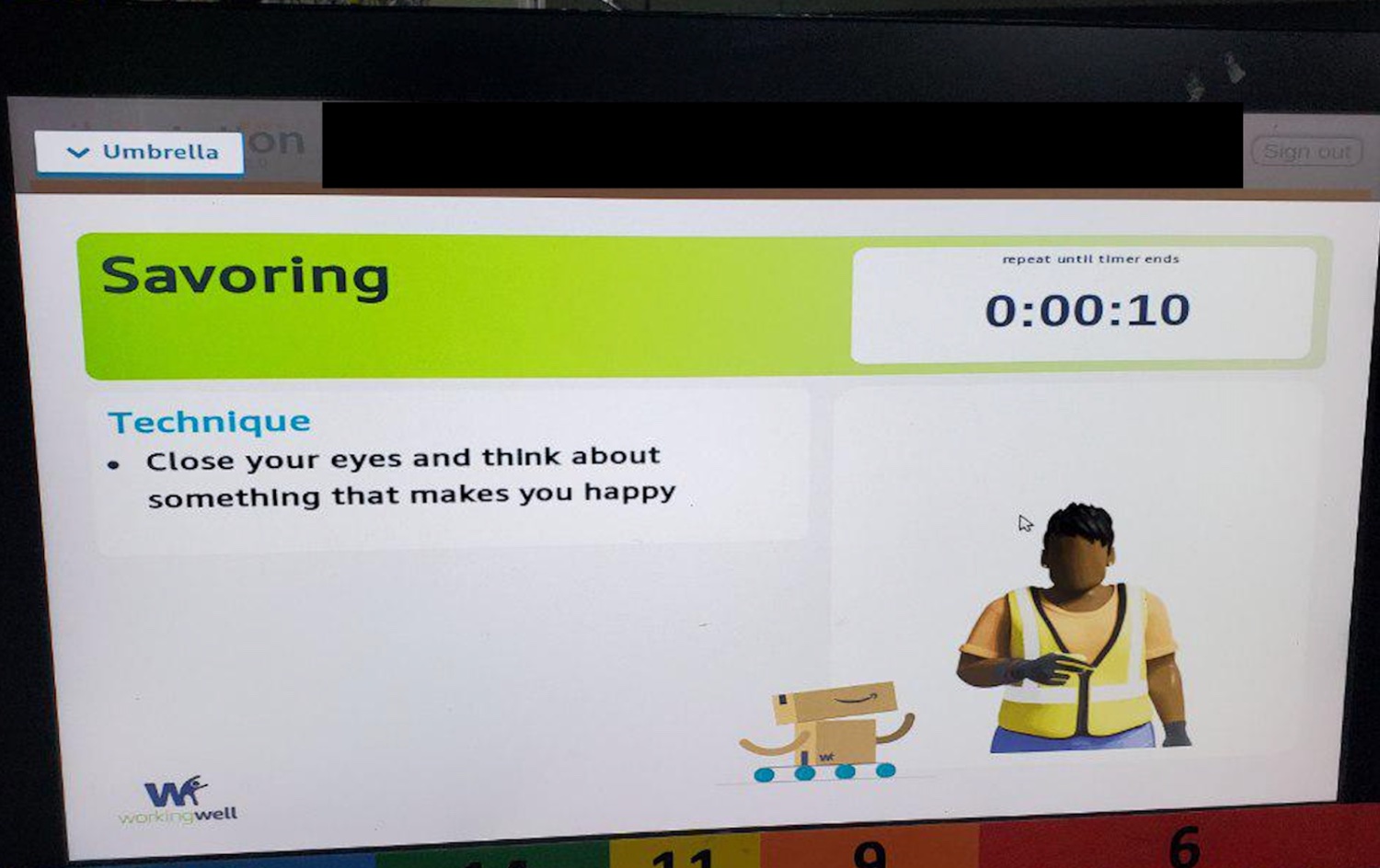

An Amazon chatbot that’s supposed to surface useful information from customer reviews of specific products will also recommend a variety of racist books, lie about working conditions at Amazon, and write a cover letter for a job application with entirely made up work experience when asked, 404 Media has found.

Because it’s a large language model that parrots human information. Not particularly surprising.

I always feel sad with these kinds of stories. The machine is clearly just trying to be helpful, but it doesn’t understand a thing about what it is doing or why we might find what it is saying repugnant. It’s like watching a dog not understanding that yes, we like our slippers, but we don’t want our neighbours’ swastika-themed ones on our doorstep.

And then of course we get to the content and I am reminded that we live in hell and the sadness is replaced by the familiar horror as the machine pretends to empathise with its fellow Amazon workers and helps them pick out the ideal thing to piss in without missing their drop targets.

🤖 💔😭

🌝🤖♥️🧍♂️🥈🏠

Can we please stop with these stories about “AI chatbot has garbage output”? We know that. Let me know when they work.

Just because you know that doesn’t mean that everybody does, and it’s an important thing to know.

I don’t see these stories as being about what the chat AI outputs, but more about questioning whether or not Amazon should be held liable for what their AI outputs. Traditional customer support chatbots are often less than useless, but they wouldn’t go about suggesting the products they’re selling are defective or recommending offensive products. I’m of the opinion that Amazon’s review search AI thing should be held to the same standard that a human would be. And if a person started acting like this, they would surely be quickly fired.

They are a black box, and for now, trying to restrain the black box has a severe impact on the usefulness of the output, even in easier and legit situations.

Claude 3 Opus will rewrite stuff for you real good. Pit it against GPT-4-Turbo at LMSys’s arena.

Rewriting. Brainstorming. Expanding notes into drafts. For some, coding.

Want to learn something new without having to re-verify it? Yeah that’ll have to wait :)

“rewrite stuff for you real good”

I guess this is one way of proving you’re not a chatbot…

They work about 75 to 90 percent of the time… You don’t really want to hear stories about that either.

Both sides of LLM stories are just clickbait.

paywalled.

The archive link is also behind the wall.

uBlock Origin FTW…

Is there a specific list I need to enable? Because I have UBO and it’s showing me the subscribe to read the full article thing.

Hope this helps 🤓

It’s the bottom that’s the problem. You can read the first paragraph or two just fine.

I can read the whole thing just fine.

Freewalled.

Already had a free account though because 404 is killin’ it. Now I’m onto another Amazon article:

I just use it to write poems and songs about the products I’m thinking of buying.