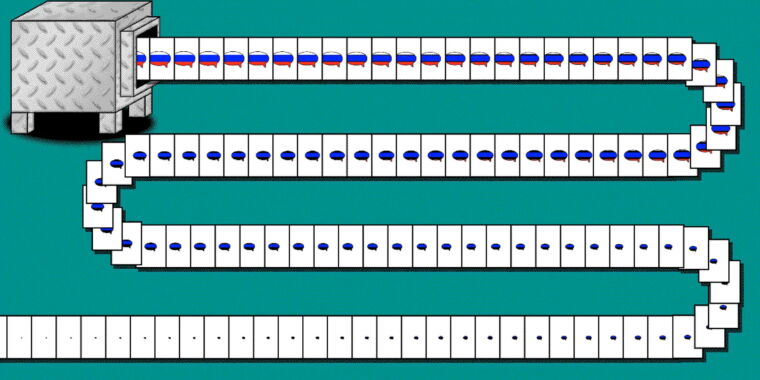

The logical end of ‘the solution to bad speech is better speech’ has arrived: state-sponsored social media propaganda bots versus AI-driven bots arguing back.

Shit. I could have told them to just block lemmygrad for like $100 😂🤣😂

Best I can do is an upvote.

Please tell me how to block a full instance.

Just a reminder, LLMs are not designed to provide truth, but rather naturally sounding word generation.

We can certainly argue over what they’re designed to do, and I definitely agree that’s the goal of them. The reality, though, is that on some level it is impossible to separate assertions from the words that describe them. Language itself is designed to communicate ideas; you can’t really create language without also communicating ideas, otherwise every sentence from an LLM would just look like:

“Has Anyone Really Been Far Even as Decided to Use Even Go Want to do Look More Like”

They will readily cite information that was fed to them. Sometimes it is on point, sometimes not. That starts to become an ethical discussion about whether it is okay for them to paraphrase information they were fed without citing it as the source of the info.

In a perfect world we should be able to expand a whole learning tree to trace back how the model pieced together each word and data point it is citing, kind of like an advanced Wikipedia article. Then you could take the typical synopsis that the model provides and dig into it to judge for yourself whether it’s accurate. From a research standpoint, I view info you collect from a language model as a step down from a secondary source, and we should be able to easily see how it gets to that info.

LLMs are at least a quaternary(?) source. They’re scraping secondary/tertiary sources. As such they’re little better than asking passersby on the street. You might get a general idea of what the zeitgeist is, but how true any particular statement actually is will vary wildly.

Math itself is designed to describe relationships between things. That doesn’t mean you can’t mock up a ‘reasonable seeming’ equation that is absolute nonsense after further examination, but that a layman will take as ‘true enough’.

LLMs don’t cite things. They provide an approximation of what a human might write. They don’t know what they’re writing or how it relates to the ‘real world’ any more than any other centerpiece of a Chinese Room.

After WWII in Germany, the cool young people knew you couldn’t trust anyone over 30.

Nowadays, cool people need to understand that you can’t trust anything bland and sanitized-sounding on the internet. For the rest of our lives, your personhood is on trial with everything you say.

It could tear society apart before we even know it’s happening.

> Nowadays, cool people need to understand that you can’t trust anything bland and sanitized-sounding on the internet.

This is bad news for my communication style.

Same but kinda not same

Moderate erasure

So is it against Russian disinformation, or does it make anti-Russia disinformation? I’d hope the former; it’s easy enough to refute Russia with correct information.

I know it’s taboo but hear me out - you could read the article and find out

Per the article, it’s the latter.

> The tweets, the articles, and even the journalists and news sites were crafted entirely by artificial intelligence algorithms, according to the person behind the project, who goes by the name Nea Paw and says it is designed to highlight the danger of mass-produced AI disinformation.

OpenAI is so concerned that AI will do x and y bad thing, yet it still pours all these resources into developing it further.

There are other endeavors where a great deal of the effort is put into making it safe. Space travel for example.

I wish that was the case for AI development. AI safety is a notoriously underfunded, understaffed and still overall neglected field.

OpenAI isn’t responsible for what Russians do with it any more than any company is for how users use their product.

If someone knows that what they’re about to create is going to do harm like this, they shoulder some of the responsibility for those consequences. They don’t just get to wash their hands of it as if they had no idea.

Why not? The people who are to blame are the people committing the act.

The thing itself has no ethical or moral impact until it’s used by a person. I think it feels good to blame an inventor, but that’s scapegoating the real culprits. The only way I see your argument making sense is if they intended their tools to be used for unethical purposes.

Because people should consider the pros and cons of what they work on, not just pretend that none of the responsibility for those cons is theirs. AI is one of the things that could wipe out humanity. Not in the Terminator sense, but through unparalleled disruption of the economy and by driving a wedge between people through the production of propaganda like none that we’ve ever seen, e.g. deepfakes, personally tailored propaganda, etc.

> to wipe out humanity

Does it? Doesn’t that threat exist even without AI? In its current state it’s a glorified chatbot. Get rid of it and we still have every think tank filled with quants, statisticians, social scientists and marketing teams pushing all that propaganda. It’s not AI doing it. It’s humans.

But AI also has the potential to develop new medicines. New materials. It has potential for a lot more good.

It also has a lot of potential to give people powerful pocket access to basic services they normally wouldn’t have. Imagine an AI trained to help people sort out their finances. Act like an r/askdocs. Help with questions about new hobbies.

So where you see panic, other people see hope. And it isn’t the inventor’s job to tell you or others how to use something.

If we destroy ourselves with every bit of advancement, then we deserve it. It would be an inevitability.

Not to mention that even if one inventor decides not to release their creation, eventually someone else will make something similar.

The incentives to continue development are far too great; if one firm abandons the project, that just means that AI will be developed by a less ethical firm. This is why arguing that AI is bad in-and-of-itself is a moderately effective way to reduce the ethics of the average AI developer.